Understanding Wasm, Part 2: Whence Wasm

“Write once, run anywhere” is a great sales pitch. It grabs your attention. It’s pithy! It invites the reader to fill in the blanks of “who is writing what, where is it going, and how does it get there” with the answer that most appeals to them. This was Java’s sales pitch. WebAssembly seems to have the same goal. Given that Java still exists, why do we need WebAssembly? What makes them different?

I found that, in order to answer that question, I had to build some context for myself around the history of Java, Smalltalk, and JavaScript. Each of these languages presented not just a virtual machine, in the sense that we talked about in the last post, but a virtual platform: a vision for computing that required abstracting over the specifics of many different physical machines. Each language was conceived under vastly different market pressures with different ideas about who their primary audience was & how programs might be distributed. Each came to regard computing as a bridge to running their respective language. WebAssembly emerges from their stories by taking the opposite approach.

Java

Since we’re wondering out loud about “why we need Wasm if we have Java”, let’s start here.

If you’re unfamiliar with Java, it is a general-purpose, object-oriented programming language first released in January 1996 by Sun Microsystems, in concert with Netscape Navigator 2.0. Java resembles C++, at least superficially. I don’t think I’m overstepping by saying that Java’s object model looks like what most new programmers first imagine C++’s object model to be, before they see the gory details where that model meets the machine.

```java
class Greeter {
    private final String msg;

    public Greeter(String m) {
        msg = m;
    }

    public String greet() {
        // Java string literals use double quotes; single quotes denote a char.
        return msg + ", world!";
    }

    public static void main(String[] args) {
        Greeter greeter = new Greeter("Hello");
        System.out.println(greeter.greet());
    }
}
```

The computer industry in 1996, at the time of Java’s release, looks strange from a modern point of view. The divide between professional hardware and consumer hardware was far more pronounced than it is today. Professional computers (“workstations”) typically had custom hardware and operating systems. At this point in time, a program might have to be compiled for several instruction set architectures (“ISAs”, per last post) — SPARC, PA-RISC, MIPS, and Alpha — and for one of several operating systems, which were typically workstation-vendor specific: Silicon Graphics’ IRIX, DEC’s VMS and Ultrix, SunOS, or Windows NT[1]. Personal computers, meanwhile, predominantly ran Windows on 32-bit Intel x86 processors. Apple clung to life, throwing Mac OS and IBM/Motorola PowerPC processors into the mix. Compiling, testing, and distributing software to each of these targets was a major hassle.

Then there was the web. ViolaWWW, Mosaic[2], and eventually Netscape hinted at a future where all of these computers would communicate with each other not just via text and file download, but with rich, graphical applications. And this future was imminent.

Even though the Web had been around for 20 years or so, with FTP and telnet, it was difficult to use. Then Mosaic came out in 1993 as an easy-to-use front end to the Web, and that revolutionized people’s perceptions. The Internet was being transformed into exactly the network that we had been trying to convince the cable companies they ought to be building.

- James Gosling, “Happy 3rd Birthday, Java!”

Sun released Java, a language “built for the ’net”, and gave away its development kit for free so developers could “write once, run anywhere.” The Java Virtual Machine implementation — the Java Runtime — was implemented in C for each major combination of processor ISA and operating system. The specifics of each machine and operating system combination were to be hidden from programs in the runtime environment well enough that one could write a single implementation of an application in Java and ship it to every target.

Except, uh.

All the stuff we had wanted to do, in generalities, fit perfectly with the way applications were written, delivered, and used on the Internet. It was just an incredible accident. And it was patently obvious that the Internet and Java were a match made in heaven. So that’s what we did.

- James Gosling, “Happy 3rd Birthday, Java!”

Java wasn’t actually built for the net. It was built as part of “Project Green”[3], a system for controlling embedded consumer home devices via radio: phones, VCRs, TVs, and door knobs. This was a four-year-long moonshot[4] that was near failure[5] when lead technologist Patrick Naughton wrote a last-ditch business plan that targeted the PC market instead. He almost got fired for it. On the strength of a Java web browser demo, WebRunner, the plan was saved. This plan involved creating an ecosystem of Java developers while strategically partnering with Netscape to distribute the Java runtime. “Run anywhere” came to include “on any consumer’s PC, via the web.”

Sun stood to gain from this: they could license the language runtime to large providers like IBM, Apple, or Microsoft, build a base of developers for their nascent server market, and experiment more freely with hardware architecture on their workstations. Netscape, at the time, had something like a 90% share of the browser market, but was fending off Microsoft. Microsoft had approached Netscape in mid-1995 with a proposition for a “special relationship”: take Microsoft’s investment, become a preferred developer, and only ship Netscape Navigator to pre-Windows 95 operating systems. Stop competing with Microsoft’s Internet Explorer browser. Netscape needed an ally, and Sun wanted Microsoft’s share of the PC market.

They teamed up: Netscape and Sun co-designed a language for non-programmers, meant for bootstrapping and orchestrating larger Java applets, in order to prevent Microsoft from positioning Visual Basic in that space[6]. This language, originally called “Mocha”, then “LiveScript”, and finally “JavaScript”, was inspired by Scheme, Self, and HyperTalk (about which, more later).

Netscape added an <applet> HTML tag in order to support Java and began building a plugin interface to support embedding other media types[7]. The <applet> tag would run Java in-process in an embedded Java Virtual Machine implementation, while the plugin API (“Netscape Plugin API”, or “NPAPI”) would allow developers to distribute plugins for other media types as shared libraries. These libraries would be compiled to their audience’s native ISA and operating system. With these additions, the web was no longer static text — it was dynamic. Java caught on like wildfire.

The sudden appearance and rapid adoption of Java and Netscape caused Sun’s arch-enemy, Microsoft, great alarm[8].

“Java Brews Trouble For Microsoft”, Nick Wingfield and Martin LaMonica, Infoworld Nov 95

Microsoft controlled the lion’s share of the PC market but were struggling to make inroads into the workstation market with Windows NT. They were aware of the web, but they were convinced they had time to build something better. Something they could control[9]. They called this “Project Blackbird”: a model for bringing their Object Linking and Embedding (“OLE”) API to the Web.

(You may be familiar with OLE or its related technologies, the Component Object Model (“COM”) and ActiveX; these are the APIs that allow embedding of rich content from one application to another in Windows, among other things. If you’ve ever pasted an Excel spreadsheet into a Word document, you’ve seen OLE at work.)

“Microsoft and Netscape open some new fronts in escalating Web Wars”, Bob Metcalfe, Infoworld Aug 95

Microsoft’s plan was to distribute signed OLE objects over the internet using MSN as their flagship example. However, it wasn’t set to ship until 1996 at the earliest, and Netscape had already made huge inroads by 1994.

Microsoft responded in character to the threat: they included their browser[10], Internet Explorer, for free[11] in every copy of Windows 95. Overnight, Netscape’s primary product had been reduced to a feature of another, more popular product. Internet Explorer emulated NPAPI on top of their own ActiveX plugin model, a remnant of the Project Blackbird plan. They supported the <applet> tag by licensing the rights to build a Windows Java runtime implementation (“MSJVM”) from Sun. This license included a proviso requiring Microsoft to provide a complete implementation of the standard Java APIs. Sun was wary of Microsoft embracing, extending, and extinguishing Java by diluting the language.

Netscape was forced to diversify their product line and, in the process, ran headlong into the limits of what was possible with Java at the time. Multiple rewrites of Netscape products from C and C++ into Java were abandoned. Java wasn’t a silver bullet: it wasn’t fast enough, and the quality of virtual machine runtimes varied too greatly.

Sun was aware of, and acting on, the Java performance problem as early as 1997. They purchased a company working on an optimizing just-in-time compiler VM for another language, Smalltalk. Sun put the team to work on building a JIT VM, called “HotSpot”, for Java.

When the dust settled on the first browser war, Microsoft had a controlling share of the browser market. The web would stagnate for years, while innovation mostly appeared via the window Netscape left open: NPAPI. Other plugins thrived; most notably, Flash.

In 1997, Sun brought suit against Microsoft: the MSJVM bundled into Windows was incomplete. Sun alleged Microsoft was up to its usual tricks: embracing the language, only to squeeze it out of existence. The lawsuit was eventually settled in 2001; Microsoft would remove MSJVM and require users to download a JVM plugin from Sun in order to support Java web applets. Other browser vendors were unwilling (or unable) to pay to license a Java runtime to bundle directly, and so Java was relegated to plugin status. End users would have to find and download appropriate versions of the Java runtime whenever they wished to run an applet. This friction, on top of sluggish performance and poor browser integration, sealed Java’s fate as a web platform technology.

The HTML5 spec would later declare the applet tag obsolete in favor of web-native solutions. Browser vendors wished to remove the NPAPI plugin interface, which had become a major source of security bugs and a point of divergence with mobile browsing.

Java’s problem as a web client was that it wanted to be its own platform. Java wasn’t well integrated into the HTML-based architecture of web browsers. Instead, Java treated the browser as simply another processor to host the Sun-controlled “write once, run [the same] everywhere” Java application platform.

Its goal wasn’t to enhance native browser technology—its goal was to replace them.

- Allen Wirfs-Brock, “The Rise and Fall of Commercial Smalltalk”

Java would go on to become a popular language in server, mobile[12], and embedded contexts[13]. It even runs on cellular SIM cards[14]! However, Sun (and later, Oracle) took the language out of the running for the web platform through protective licensing. The problems Java left in its wake, <applet>s and NPAPI, would remain unsolved for years.

If Java had remained a part of the web platform, this post might have been about it[15].

Smalltalk

I want to back up to the Smalltalk folks and their just-in-time compiler virtual machine. What’s Smalltalk?

The purpose of the Smalltalk project is to provide computer support for the creative spirit in everyone. […] If a system is to serve the creative spirit, it must be entirely comprehensible to a single individual.

- Dan Ingalls, “Design Principles of Smalltalk”

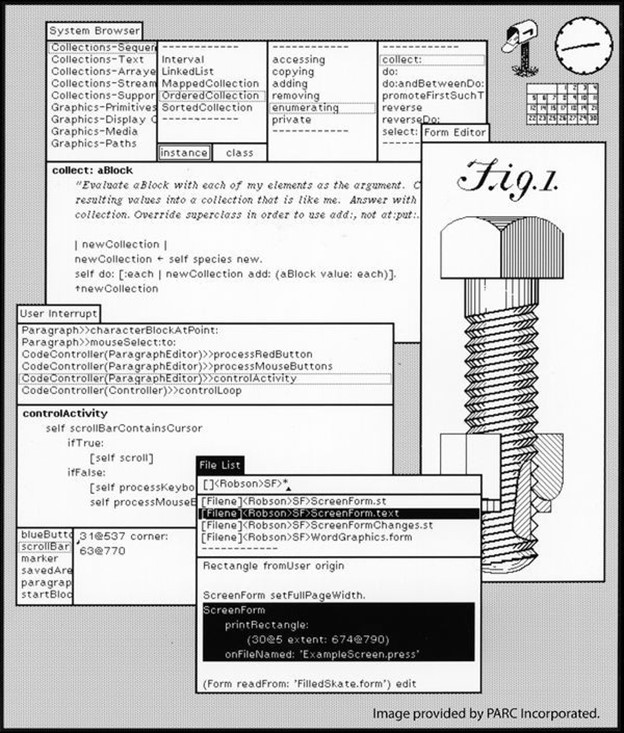

Java virtualized the machine in order to provide a stable language platform for developers out of a pragmatic need. Smalltalk virtualized the machine in service of a core metaphor out of an ideal: universal programming literacy. It wasn’t just a language: it was a consistent point of view that carried from the operating system through to every program running on the machine. There was no separation between operating system and program: there were only objects.

[M]illions of potential users meant that the user interface would have to become a learning environment along the lines of Montessori and Bruner[.]

- Alan Kay, “The Early History of Smalltalk”

This makes sense, given Smalltalk’s lofty goals and inspirations. The first version of Smalltalk was written in 1972, part of a vision for computing that included universal literacy. If you’ve heard Steve Jobs refer to the computer as a “bicycle for the mind”, this is where he got the idea.

via the Computer History Museum

[LISP] started a line of thought that said “take the hardest and most profound thing you need to do, make it great, and then build every easier thing out of it”. That was the promise of LISP and the lure of lambda— [all that was] needed was a better “hardest and most profound” thing. Objects should be it.

- Alan Kay, “The Early History of Smalltalk”

Smalltalk’s core metaphor was one of objects passing messages to other objects. Objects could be made to react as the programmer chose to any incoming message, whether that message type was known ahead of time or not. The metaphor was carefully chosen: when designing a class of object, the programmer was to put themselves in the shoes of the object itself; to think in terms of what the object “saw”. (Garbage collection, the automatic deletion of unused objects, fell out from this metaphor naturally: when an object was no longer visible from any other object, it must disappear. Thus, garbage collection was an inherent property of the Smalltalk environment.) It was an enormously flexible design, one that redefined “virtual machine” to include the entire operating system.
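In Smalltalk, an object that receives a message it has no method for is sent "doesNotUnderstand:" instead, and may respond however it likes. JavaScript has nothing built in that behaves quite like this, but a Proxy can approximate it. The sketch below is a loose analogy, not how Smalltalk is implemented: messageReceiver is a hypothetical helper, and property access stands in for a message send.

```javascript
// Approximating Smalltalk's doesNotUnderstand: with a Proxy (a loose analogy).
// Known selectors dispatch to ordinary methods; unknown ones go to a fallback.
function messageReceiver(methods, doesNotUnderstand) {
  return new Proxy(methods, {
    get(target, selector) {
      if (selector in target) return target[selector];
      // Any other "message" is still answered, by the fallback handler.
      return (...args) => doesNotUnderstand(String(selector), args);
    },
  });
}

const logger = messageReceiver(
  { greet: (name) => `Hello, ${name}` },
  (selector, args) => `received #${selector} with ${args.length} argument(s)`
);

console.log(logger.greet("world")); // Hello, world
console.log(logger.frobnicate(1, 2)); // received #frobnicate with 2 argument(s)
```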

So why didn’t Smalltalk take over the world?

With 20/20 hindsight, we can see that from the pointy-headed boss perspective, the Smalltalk value proposition was:

Pay a lot of money to be locked in to slow software that exposes your IP, looks weird on screen and cannot interact well with anything else; it is much easier to maintain and develop though!

On top of that, a fair amount of bad luck.

- Gilad Bracha, “Bits of History, Words of Advice”[16]

Smalltalk’s flexibility came at a cost. Garbage collection cycles could be painfully slow[17], the lack of rigidity made “programming in the large” difficult[18], and the language-as-operating-system environment meant it was difficult to integrate into other operating systems. Gilad Bracha argues that one of the properties that sealed Smalltalk’s fate was its open nature. Programs were shipped as images containing all of the objects comprising the entire operating system. The intent was universal programming literacy, after all, so the system continued to be modifiable after the fact. The software market of the 1980s had gone a different direction: software was an immutable artifact produced by programmers and sold to consumers. Companies didn’t want to ship all of their valuable intellectual property along with the appliance they were selling.

So, what happened to Smalltalk?

The market for Smalltalk machines peaked in the 1980s. The language itself was most influential in what it inspired and invented. In addition to inspiring the Apple Lisa, the Xerox Alto also inspired Carnegie Mellon University professor Raj Reddy to coin the term “3M computer” (“a Megabyte of memory, a Megapixel display, and a Million instructions per second — for less than a Megapenny, or $10K”), which indirectly created the workstation market we talked about earlier[19]. Smalltalk also inspired integrated development environments, debuggers, and entire windowing system features we take for granted today. The pedagogy Smalltalk developed for classrooms[20] would go on to inspire one of the students in that classroom to invent HyperCard[21].

But, most relevant to this discussion, Smalltalk contributed huge advances in optimizing virtual machines through its later dialects, Self and Strongtalk. I gestured at the creation of the HotSpot VM earlier. It was, in fact, the folks working on these two projects that Sun hired: among others, Gilad Bracha[22], David Ungar[23], Urs Hölzle, and Lars Bak. Their work on improving the performance of Smalltalk would be adapted to improve the performance of bytecode virtual machines in general.

And what of Smalltalk? Java largely supplanted it, as some feared it would. Smalltalk wasn’t the right thing, but it pointed at the right thing.

Smalltalk did something more important than take over the world—it defined the shape of the world!

- Allen Wirfs-Brock, “The Rise and Fall of Commercial Smalltalk”

Which brings us, somewhat ironically, to JavaScript.

JavaScript

Nobody liked JavaScript[24].

JavaScript was an unlikely survivor of the first browser war: a “toy” scripting language meant only to combat Visual Basic and coordinate the larger Java applets marshalled by websites. As it was first imagined, it lacked features considered core to other serious languages — or hid those features behind strange constructions. But it grew steadily, riding along with every browser shipped, forever backwards-compatible.

JavaScript as it existed until 2014 was an ugly language: the elegant object model of Self combined with function closures from Scheme integrated with document object models inspired by HyperTalk, all of which were slathered in a thick coat of Java syntax.

```javascript
function Greeter(msg) {
  if (!(this instanceof Greeter)) {
    return new Greeter(msg);
  }
  this.msg = msg;
}

// All functions had a field, "prototype", that
// pointed at an object.
Greeter.prototype.greet = function () {
  return this.msg + ', world!';
};

// When the function is invoked with "new", a
// new object would be created whose internal
// "[[Prototype]]" slot pointed at the
// function's ".prototype" object.
//
// We walked uphill both ways in the snow.
var obj = new Greeter("Hello");

// The language didn't ship with "console.log",
// or any way to log text, really.
//
// You had to use browser APIs, like "alert".
alert(obj.greet());
```

But the language grew in fits and starts, subject at once to the aims of giant corporations and to the expectations of the largest userbase in the world: every website. First, JavaScript became functional. Then JavaScript got fast, for consumers. Then JavaScript got pretty, for programmers.

```javascript
// JavaScript after 2014:
class Greeter {
  constructor(msg) {
    this.msg = msg;
  }

  greet() {
    return `${this.msg}, world!`;
  }
}

const obj = new Greeter("Hello");
console.log(obj.greet());
```

In 1999, Microsoft shipped the second version of its MSXML ActiveX library as part of Internet Explorer 5. There’s some irony here: Microsoft’s Project Blackbird was a platform play to give them control of the nascent web platform, implemented using ActiveX. MSXML’s XMLHTTP object, later standardized in the platform as XMLHttpRequest, would give us AJAX (“Asynchronous JavaScript and XML”). AJAX would unlock a new breed of web applications, from webmail to maps. The second browser war kicked off. This time, Microsoft lost.

- 1998: Netscape launches the open-source Mozilla project.

- 2001: “JavaScript Object Notation” (“JSON”) is “discovered” by Douglas Crockford at State Software.

- 2002: Mozilla releases Phoenix (now “Firefox”).

- 2004: Ruby on Rails first released.

- 2005: Prototype, a polyfill/cross-document-model JavaScript framework, first released.

- 2005: Git first released.

- 2006: Firebug, a JavaScript development plugin for the Firefox browser, first released.

- 2006: jQuery, a polyfill & cross-document-model development framework, first released.

- 2007: MooTools, ditto.

- 2007: Google purchases DoubleClick.

- 2008: Google releases Chrome, a web browser with a high-performance JIT JavaScript VM, V8.

- 2008: GitHub launches.

- 2008: “JavaScript: The Good Parts” is released.

- 2009: Firefox 3.5 launches with TraceMonkey, a high-performance JIT JavaScript VM.

- 2009: Node.js, an evented JavaScript language platform powered by V8, first released.

To extract a theme: JavaScript as it existed between 1995 and 2009 did not ship with a particularly complete standard library, nor did it ship with any dedicated syntax for modularity. However, it was possible to build these libraries in JavaScript. And people did.
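One common way people built those libraries was what became known as the module pattern: wrap the library in an immediately invoked function expression, keep private state in its closure, and return an object holding the public API. The sketch below is illustrative only; Counter, increment, and reset are hypothetical names, written in pre-2015 style (var, no import/export).

```javascript
// The "module pattern": an immediately invoked function expression (IIFE)
// gives the library its own scope, since the language had no module syntax.
var Counter = (function () {
  var count = 0; // private: visible only inside this closure

  function increment() {
    count += 1;
    return count;
  }

  function reset() {
    count = 0;
  }

  // Only the returned object is visible to other scripts on the page.
  return { increment: increment, reset: reset };
})();

Counter.increment();
console.log(Counter.increment()); // 2
console.log(typeof count); // undefined: the private variable never reached global scope
```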

Microsoft lost this browser war, in fact, to Google, which emerged as a new, major player during this time period. Google made money by serving ads ahead of search results; the faster (and safer) it could get relevant results in front of users, the more money it could make. Thus incentivized, Google employed Lars Bak to translate the optimization techniques he had perfected on the Java and Smalltalk VMs to JavaScript, creating the Chrome browser, powered by the V8[25] optimizing JavaScript runtime. Firefox, Safari, and other browsers adopted these techniques. Microsoft’s 1990s business model demanded killing or compartmentalizing the web; Google’s demanded growing it. Chrome ended up winning the second browser war in late 2012, overtaking Internet Explorer, Safari, and Firefox.

The newfound ubiquity of fast JavaScript VMs led, inevitably, to a renewed interest in using JavaScript as a “write once, run anywhere” platform. Fast JavaScript VMs spawned a number of server-side language platforms, cross-platform application development tools, and operating systems. Between 2008 and 2015, Node.js, Electron, React Native, ChromeOS, webOS, and Tizen[26] emerged. Node, in particular, revitalized the web platform: JavaScript bundling tools began to provide pure-JavaScript browser polyfills for Node’s functionality, and Node’s module standard, CommonJS, became the de facto pattern for modularity.

But, as with Java before it, these JavaScript platform tools hit limitations. There was an overhead to writing all code in JavaScript: the memory usage of cross-platform applications regularly ballooned to incredible size; browser polyfills could only do so much; garbage collection pauses were still an issue, especially on embedded devices; and bundlers produced huge payloads that mobile phones struggled to download and parse. And there was still no good way to move applications written in other languages over to the JavaScript platform short of rewriting them.

There were other pressures on the web through this time, however. Notably: the Dot Com bubble burst, Apple created a new computing market with the iPhone and iPad products, Google shipped the Android smartphone operating system[12], Oracle acquired Sun Microsystems, and Microsoft found itself struggling to enter the new markets Apple had opened. The window Netscape left open for Java, NPAPI, attracted other plugins: Flash and Silverlight. Despite the functionality they unlocked, managing these plugins was difficult and perilous, pushing both dependency management and security vetting onto end users. An exploited bug in a plugin ran with the full privileges of the user’s account on the computer, and any website (or frame within a website) could deliver an exploit for such a bug.

Thus, Apple famously doomed Flash by denying it access to the iPhone platform in favor of web platform technologies. The new mobile web would not have plugins.

JavaScript remains popular, fast, and ubiquitous today. And yet, at the turn of the ’10s, there was still no good alternative to NPAPI plugins for desktop browsers. Like Smalltalk, JavaScript was still difficult to program “in the large”; like Java, running existing software required a language port: companies with large C++ applications had to manually rewrite them in JavaScript. Google was particularly interested in solving this problem, having just launched its ChromeOS project, which would replace the traditional user-visible operating system layer with a web browser. It launched three projects: Dart[27], PPAPI (“Pepper”), and Native Client (“NaCl”[28], a pun on the chemical formula for salt). While Dart was capable of compiling to JavaScript as a target, it lost some language capabilities in the process; it was clearly intended to replace JS long-term. PPAPI and NaCl endeavoured to harden the NPAPI plugin interface by running native-ISA plugin code in a virtual machine[29]. Competing browser vendors balked at the cost of supporting an entirely separate virtual machine; they were at an impasse.

Interlude: Virtual ISAs

What was happening with computer hardware while all this was happening with web browsers? It’s worth revisiting that 1990s workstation market at this point. The market for custom RISC processors shrank dramatically in the 2000s because Intel x86 processors caught up to the performance of the more expensive RISC designs while remaining relatively low-cost. This was completely unexpected.

In fact, x86 seemed to be doomed at the turn of the century. Much was made of perceived performance boundaries of the x86 ISA, the most common prediction being a shift to “very long instruction word” (“VLIW”) architectures. There was a scramble to find a way to build a compatibility bridge from x86 to this VLIW future.

This is where the term “virtual ISA” originates.

The 1997 DAISY paper, 1998’s “Achieving high performance via co-designed virtual machines”, and 2003’s “LLVA: A Low-level Virtual Instruction Set Architecture” originated the idea of a “virtual instruction set architecture” (“virtual ISA” or “V-ISA”) to address the inflexibility and seemingly imminent obsolescence of x86. They were written in reaction to Java’s virtual machine; the LLVA paper in particular defines several useful design goals for both the “Virtual Instruction Set Computer” (“VISC”) and “Virtual Abstract Binary Interface” (“V-ABI”) in order to differentiate their approach from the JVM. Quoting (with some paraphrasing) from the LLVA paper:

- Simple, low-level operations that can be implemented without a runtime system. To serve as a processor-level instruction set for arbitrary software and enable implementation without operating system support, the V-ISA must use simple, low-level operations that can each be mapped directly to a small number of hardware operations.

- No execution-oriented features that obscure program behavior. The V-ISA should exclude ISA features that make program analysis difficult and which can instead be managed by the translator, such as limited numbers and types of registers, a specific stack frame layout, low-level calling conventions, limited immediate fields, or low-level addressing modes.

- Portability across some family of processor designs. [A] good V-ISA design must enable some broad class of processor implementations and maintain compatibility at the level of virtual object code for all processors in that class (key challenges include endianness and pointer size).

- High-level information to support sophisticated program analysis and transformations. Such high-level information is important not only for optimizations but also for good machine code generation, e.g., effective instruction scheduling and register allocation.

- Language independence. Despite including high-level information (especially type information), it is essential that the V-ISA should be completely language-independent, i.e., the types should be low-level and general enough to implement high-level language operations correctly and reasonably naturally.

- Operating system support. The V-ISA must fully support arbitrary operating systems that implement the V-ABI associated with the V-ISA.

The authors note that language platform virtual machines like Java fail to meet the first, fifth, and sixth requirements. The paper goes on to propose a design for such a virtual ISA and ABI.
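As a rough illustration of two of the goals above (simple, typed, low-level operations, and leaving register counts and frame layout to the translator), here is a toy virtual ISA. It is an assumption-laden sketch, not the LLVA paper’s actual instruction set or encoding: program, HOST_OPS, and translate are hypothetical names, and translating into JavaScript closures stands in for generating native machine code.

```javascript
// A toy virtual ISA (hypothetical; NOT LLVA's real instruction set or encoding).
// Instructions are typed and three-address, over an unlimited supply of named
// virtual registers. Nothing here says how many hardware registers exist or
// how a stack frame is laid out; those choices belong to the translator.
const program = [
  { op: "add", type: "i32", dst: "%t0", a: "%x", b: "%y" },
  { op: "mul", type: "i32", dst: "%t1", a: "%t0", b: "%t0" },
  { op: "ret", type: "i32", a: "%t1" },
];

// Each (operation, type) pair maps onto one host operation with 32-bit wraparound.
const HOST_OPS = {
  add: { i32: (a, b) => (a + b) | 0 },
  mul: { i32: (a, b) => Math.imul(a, b) },
};

// "Translate" once, ahead of execution. A real translator would emit machine
// code and allocate physical registers; here a Map stands in for registers.
function translate(params, instructions) {
  const steps = instructions.map((ins) => {
    if (ins.op === "ret") {
      return (regs) => ({ done: true, value: regs.get(ins.a) });
    }
    const hostOp = HOST_OPS[ins.op] && HOST_OPS[ins.op][ins.type];
    if (!hostOp) throw new Error(`unsupported instruction ${ins.op}.${ins.type}`);
    return (regs) => {
      regs.set(ins.dst, hostOp(regs.get(ins.a), regs.get(ins.b)));
      return { done: false };
    };
  });

  return (...args) => {
    const regs = new Map(params.map((name, i) => [name, args[i] | 0]));
    for (const step of steps) {
      const result = step(regs);
      if (result.done) return result.value;
    }
    throw new Error("program ended without ret");
  };
}

const squareOfSum = translate(["%x", "%y"], program);
console.log(squareOfSum(3, 4)); // 49
```

Unsupported instructions are rejected at translation time rather than mid-execution, which is loosely the role the papers assign to the translator sitting between the virtual and native ISAs.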

Ultimately, the x86 ISA proved not to be a bottleneck. Intel engineers devised a means to virtualize the x86 ISA itself in hardware[30] (a possibility hinted at in the papers above). That is, a modern x86 processor decodes x86 instructions into micro-operations (or “µops”), which allows for a RISC-like hardware implementation behind the scenes. Having found success with this approach, Intel abandoned their VLIW architecture, IA-64, in favor of continuing forward with x86 processors. The workstation market, for the most part, turned into consumer hardware at this point.

A primary goal in using VMs in the manner just described is to provide platform independence. That is, the Java bytecodes can be used on any hardware platform, provided a VM is implemented for that platform. In providing this additional layer of abstraction, however, performance is typically lost because [of] inefficiencies in matching the V-ISA and the native ISA via interpretation and just-in-time (JIT) compilation.

Put a pin in that for now.

asm.js

In the early 2010s, JavaScript experienced rapid syntax changes — additions — to remove warts from the language and make it more straightforward to use. At the same time, JIT VMs were experimenting with different approaches and heuristics in order to find the best balance between immediate execution and high performance for long-lived processes. Certain constructions in the language would opt entire functions out of optimization passes. Other, more subtle deoptimizations had to do with “hidden classes.”

Let’s take a thousand-mile-high view of the optimizations that JavaScript inherited from Smalltalk VMs[31].

First: “generational scavenging” garbage collection (“GC”). In broad strokes: garbage collectors must decide whether or not a given object is “alive”, remove it if it isn’t, and reclaim the memory space for future use. There are a variety of ways to achieve this. One might mark all of the “live” objects in one pass, then “sweep” the dead objects to reclaim their space (“mark and sweep”). Or one might count the live references to every object, incrementing and decrementing that count as references are created and dropped, and reclaim an object’s space as soon as its count drops to zero (“reference counting”). Both of these approaches have overhead: they pause the execution of the program (either all at once or in small time slices) to do their work. They also suffer from fragmentation: since objects vary in size and location within memory, a costly compaction phase is occasionally needed.
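A toy mark-and-sweep collector makes the two phases concrete. This is a minimal sketch over a simulated object graph (ToyHeap and its methods are hypothetical names); a real collector walks raw memory rather than JavaScript objects. Note that the unreachable cycle between b and c is freed, which plain reference counting cannot do on its own, since each object in the cycle keeps the other’s count above zero.

```javascript
// A toy mark-and-sweep collector over a simulated object graph.
class ToyHeap {
  constructor() {
    this.objects = new Set(); // every allocated object
    this.roots = new Set(); // objects referenced directly (stand-in for stack/globals)
  }

  allocate(name) {
    const obj = { name, refs: [], marked: false };
    this.objects.add(obj);
    return obj;
  }

  // Mark phase: flag everything reachable from the roots.
  mark() {
    const worklist = [...this.roots];
    while (worklist.length > 0) {
      const obj = worklist.pop();
      if (obj.marked) continue;
      obj.marked = true;
      worklist.push(...obj.refs);
    }
  }

  // Sweep phase: free every unmarked object and clear marks for the next cycle.
  sweep() {
    const freed = [];
    for (const obj of this.objects) {
      if (obj.marked) {
        obj.marked = false;
      } else {
        this.objects.delete(obj); // deleting during Set iteration is safe in JS
        freed.push(obj.name);
      }
    }
    return freed;
  }

  collect() {
    this.mark();
    return this.sweep();
  }
}

const toyHeap = new ToyHeap();
const a = toyHeap.allocate("a");
const b = toyHeap.allocate("b");
const c = toyHeap.allocate("c");
toyHeap.roots.add(a);
b.refs.push(c); // b and c refer to each other,
c.refs.push(b); // but nothing reachable refers to either
console.log(toyHeap.collect()); // [ 'b', 'c' ]
```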

“Scavenging” describes the approach of taking all live objects and explicitly copying them to a new region before freeing the old region. “Generational” refers to an observation from Baker, Henry Lieberman, and Carl Hewitt: most objects are short-lived. A GC algorithm can take this into account by writing all new objects into a single memory space, then only copying out objects that survive one or more runs of the GC algorithm (a “generation”) to a memory space reserved for old objects. This helps address massive mark/sweep or compaction pauses without incurring the constant overhead of reference counting (and associated difficulties with breaking circular references).
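The same idea can be sketched as a toy generational collector, again over a simulated object graph rather than real memory. GenerationalHeap, TENURE_AGE, and writeRef are hypothetical names, and moving an object into a fresh Set stands in for physically copying it. The sketch also adds a remembered set filled by a write barrier, a standard companion to generational collection that the paragraph above does not discuss: it records old objects that point at young ones, so a minor collection never has to scan the old space. Collecting garbage in the old space would need an occasional full collection, which is not shown.

```javascript
// Toy generational collector over a simulated object graph (hypothetical names).
const TENURE_AGE = 2; // assumption: survive two minor collections to be promoted

class GenerationalHeap {
  constructor() {
    this.nursery = new Set(); // young objects; new allocations land here
    this.oldSpace = new Set(); // tenured objects
    this.roots = new Set(); // stand-in for stack and global references
    this.remembered = new Set(); // old objects known to point into the nursery
  }

  allocate(name) {
    const obj = { name, refs: [], age: 0 };
    this.nursery.add(obj);
    return obj;
  }

  // Write barrier: pointer stores go through here so old->young references
  // are recorded and a minor collection need not scan the old space.
  writeRef(from, to) {
    from.refs.push(to);
    if (this.oldSpace.has(from) && this.nursery.has(to)) this.remembered.add(from);
  }

  // Minor collection ("scavenge"): trace only young objects, starting from
  // the roots and the remembered set, then evacuate the survivors.
  minorCollect() {
    const survivors = new Set();
    const worklist = [...this.roots];
    for (const old of this.remembered) worklist.push(...old.refs);
    while (worklist.length > 0) {
      const obj = worklist.pop();
      if (!this.nursery.has(obj) || survivors.has(obj)) continue; // old, or already seen
      survivors.add(obj);
      worklist.push(...obj.refs);
    }

    // Whatever was not reached is garbage; dropping the old nursery frees it all at once.
    const dead = [...this.nursery].filter((obj) => !survivors.has(obj)).map((obj) => obj.name);
    this.nursery = new Set();
    const promoted = [];
    for (const obj of survivors) {
      obj.age += 1;
      if (obj.age >= TENURE_AGE) {
        this.oldSpace.add(obj);
        promoted.push(obj);
      } else {
        this.nursery.add(obj); // "copied" into the fresh nursery
      }
    }

    // Keep only old objects that still point at young ones.
    const candidates = [...this.remembered, ...promoted];
    this.remembered = new Set(candidates.filter((obj) => obj.refs.some((ref) => this.nursery.has(ref))));
    return dead;
  }
}

const genHeap = new GenerationalHeap();
const keep = genHeap.allocate("keep");
genHeap.roots.add(keep);
for (let i = 0; i < 3; i++) genHeap.allocate(`temp${i}`); // short-lived garbage
console.log(genHeap.minorCollect()); // [ 'temp0', 'temp1', 'temp2' ]
console.log(genHeap.minorCollect()); // []
console.log(genHeap.oldSpace.has(keep)); // true
```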

Second: tagged pointers. This takes advantage of the “word alignment” properties of pointers on certain architectures: that is, pointers optimally point to a multiple of the processor’s native word size in bytes. On a 64-bit system, where a word is 8 bytes, that means the three least significant bits of every aligned pointer are always zero. LISP, Smalltalk, Java, and JavaScript capitalize on this: they use these three (or more) bits as “tag” information. In particular, one bit could indicate whether the value was an object or a “primitive” value, like an integer or boolean. Smalltalk used one of the tag values to indicate that the value was a “small integer” (or “Smi”), a 31-bit integer; JavaScript inherited this property. This is handy for performance: the pointer can be used instead as an immediate value without a subsequent fetch, and most arrays can be indexed by small integers.
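Here is what one-bit tagging can look like on 32-bit words. The sketch assumes a V8-style convention (low bit 0 for a small integer, low bit 1 for a heap pointer); the helper names are hypothetical, and a real VM does this in machine code rather than JavaScript. It also shows why Smis are cheap: two tagged Smis can be added without untagging, because (a << 1) + (b << 1) equals (a + b) << 1.

```javascript
// One-bit pointer tagging on 32-bit words, assuming a V8-style convention:
//   low bit 0 -> small integer ("Smi"), value stored in the upper 31 bits
//   low bit 1 -> pointer to a heap object (aligned address with the tag bit set)
const SMI_TAG = 0;
const HEAP_OBJECT_TAG = 1;
const SMI_MIN = -(2 ** 30);
const SMI_MAX = 2 ** 30 - 1;

function tagSmi(n) {
  if (!Number.isInteger(n) || n < SMI_MIN || n > SMI_MAX) {
    throw new RangeError(`${n} does not fit in a 31-bit Smi`);
  }
  return (n << 1) | SMI_TAG;
}

function isSmi(word) {
  return (word & 1) === SMI_TAG;
}

function untagSmi(word) {
  return word >> 1; // arithmetic shift keeps the sign
}

// Addresses are assumed 4-byte aligned and below 2**31 so JS bitwise ops keep them intact.
function tagPointer(address) {
  if (address % 4 !== 0) throw new RangeError("heap addresses are assumed 4-byte aligned");
  return address | HEAP_OBJECT_TAG;
}

function untagPointer(word) {
  return word & ~1;
}

// Adding two tagged Smis needs no untagging. Returns null on overflow,
// where a real VM would fall back to a boxed heap number.
function smiAdd(x, y) {
  const sum = x + y; // exact: JS numbers are doubles
  if (sum > 0x7fffffff || sum < -0x80000000) return null;
  return sum;
}

const answer = smiAdd(tagSmi(20), tagSmi(22));
console.log(isSmi(answer), untagSmi(answer)); // true 42
```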

Third: polymorphic inline caches (“PICs” or “ICs”). These are part of the type-feedback mechanism introduced by Self. Since any given bit of Smalltalk, Java, or JavaScript code may deal abstractly with many different types of objects, JIT VMs for these languages insert inline caches into generated code. These inline caches collect information on the “types” that pass through a given call site or property access. If, after a few executions, the types are consistent, the JIT may optimize that code by rewriting it from VM bytecode to native machine code, translating the inline cache calls as necessary. If the inline cache is later invalidated by a new type of object passing through, the VM may fall back to the bytecode version of the code or, eventually, “de-optimize” and discard the optimized machine code. Inline caches consult both the tag bits mentioned above and the object's “hidden class”, a separate tag recording which fields (and associated types) have been added to the object since its creation. (A sort of “vector in class-space”, so to speak.)
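The per-site cache keyed on hidden class can be sketched in a few lines of Java. This toy assumes a property-load site like `obj.x`, a limit of four cached shapes before the site is treated as megamorphic (real VMs pick their own limits), and hypothetical names (`Shape`, `PropertyLoadIC`); it models only the cache lookup, not JIT compilation or de-optimization.

```java
import java.util.HashMap;
import java.util.Map;

// Toy polymorphic inline cache for one property-load site; all names are hypothetical.
class InlineCacheDemo {
    // A stand-in for a hidden class: maps each field name to a slot index.
    static final class Shape {
        final Map<String, Integer> offsets = new HashMap<>();
    }

    static Shape shapeOf(String... fields) {
        Shape s = new Shape();
        for (int i = 0; i < fields.length; i++) s.offsets.put(fields[i], i);
        return s;
    }

    static final class Obj {
        final Shape shape;
        final Object[] slots;
        Obj(Shape shape, Object... slots) { this.shape = shape; this.slots = slots; }
    }

    static final class PropertyLoadIC {
        static final int MAX_ENTRIES = 4; // assumed polymorphism limit
        final String name;
        final Shape[] shapes = new Shape[MAX_ENTRIES];
        final int[] offsets = new int[MAX_ENTRIES];
        int size = 0, hits = 0, misses = 0;
        boolean megamorphic = false;

        PropertyLoadIC(String name) { this.name = name; }

        Object load(Obj obj) {
            // Fast path: an identity check on the shape, then a direct slot read.
            for (int i = 0; i < size; i++) {
                if (shapes[i] == obj.shape) { hits++; return obj.slots[offsets[i]]; }
            }
            // Slow path: full lookup, then remember this shape if there is room.
            misses++;
            Integer offset = obj.shape.offsets.get(name);
            if (offset == null) return null; // property absent ("undefined")
            if (size < MAX_ENTRIES) {
                shapes[size] = obj.shape;
                offsets[size] = offset;
                size++;
            } else {
                megamorphic = true; // too many shapes: stop caching new ones
            }
            return obj.slots[offset];
        }

        String state() {
            if (megamorphic) return "megamorphic";
            if (size == 0) return "uninitialized";
            return size == 1 ? "monomorphic" : "polymorphic";
        }
    }

    public static void main(String[] args) {
        Shape point = shapeOf("x", "y");
        Shape point3d = shapeOf("z", "x", "y"); // same field name, different slot
        PropertyLoadIC loadX = new PropertyLoadIC("x");
        for (int i = 0; i < 3; i++) loadX.load(new Obj(point, i, 0));
        System.out.println(loadX.state() + " hits=" + loadX.hits + " misses=" + loadX.misses);
        System.out.println(loadX.load(new Obj(point3d, 9, 5, 6)) + " " + loadX.state());
    }
}
```

This should print `monomorphic hits=2 misses=1`, then `5 polymorphic`: the second shape stores `x` in a different slot, so the site needs a second cache entry. A JIT that sees a site stay monomorphic can compile it down to a single shape comparison and a fixed-offset load.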

These optimizations, ubiquitous in browser engines though in slightly different forms, made JavaScript a compelling compilation target. In 2010, work kicked off on Emscripten, a C/C++ to JavaScript compiler. In 2011, Fabrice Bellard (the author of QEMU) released JSLinux: a PC emulator written in JavaScript that boots a Linux operating system in the browser.

By 2013, Emscripten output was frequently out-performing idiomatic hand-written JavaScript. This spurred the Mozilla team32 to define and ship asm.js33.

asm.js34 relies on the following optimizations:

- Loads from and stores to typed arrays (JavaScript's standardized “buffer of bytes” object) are optimized.

- Certain bitwise operations can be used as type annotations, to be consulted by ICs later. That is, the VM knows that x|0 always returns a 32-bit integer; likewise, (x+y)|0 is always 32 bits, so there is no need to insert overflow checks. Optimizing VMs are capable of translating these type-annotated operations directly to native ISA operations.

- All operations work against a single, long-lived typed array, treating it as the addressable space for the process, which means performance doesn't suffer from GC pauses or stutter.
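Java's `int` already behaves like an asm.js-annotated integer, which makes it a convenient way to sketch both conventions above. In this hypothetical example (the names are not asm.js APIs), a `ByteBuffer` stands in for the module's single `ArrayBuffer`, an `IntBuffer` view stands in for `Int32Array`, and plain `int` addition plays the role of `(x + y) | 0`; the 64 KiB heap size is arbitrary.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.nio.IntBuffer;

// Java analogue of two asm.js conventions; names are hypothetical, not asm.js APIs.
class AsmJsStyle {
    // One long-lived buffer serves as the program's entire address space.
    // Allocated once, so ordinary operation creates no garbage to collect.
    static final ByteBuffer HEAP = ByteBuffer.allocate(64 * 1024).order(ByteOrder.LITTLE_ENDIAN);
    // A 32-bit integer view over the same bytes, like `new Int32Array(buffer)`.
    static final IntBuffer HEAP32 = HEAP.asIntBuffer();

    // asm.js writes `(x + y) | 0` to promise a wrapping 32-bit result;
    // Java's int addition already has exactly those semantics.
    static int add(int x, int y) { return x + y; }

    // Byte address p maps to element p >> 2, mirroring asm.js's `HEAP32[p >> 2]`.
    static void store32(int p, int value) { HEAP32.put(p >> 2, value); }
    static int load32(int p) { return HEAP32.get(p >> 2); }

    // Sum `count` ints stored contiguously starting at byte address p.
    static int sum(int p, int count) {
        int acc = 0;
        for (int i = 0; i < count; i++) acc = add(acc, load32(p + (i << 2)));
        return acc;
    }

    public static void main(String[] args) {
        for (int i = 0; i < 4; i++) store32(256 + 4 * i, i + 1); // store 1, 2, 3, 4
        System.out.println(sum(256, 4));                // 10
        System.out.println(add(Integer.MAX_VALUE, 1)); // -2147483648, as (2147483647 + 1) | 0 in JS
    }
}
```

Because every "pointer" is just an integer offset into one buffer, C-style pointer arithmetic maps onto plain integer math, which is what lets an optimizing VM lower this code straight to native loads, stores, and adds.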

asm.js specified a strict subset of JavaScript, one that could be validated cheaply ahead of time by compatible VMs, but would still run with acceptable performance in VMs that knew nothing about asm.js. Mozilla called this technique “ahead of time” (“AOT”35) compilation, and released a killer demo: the Unreal Engine, 250K lines of C++, compiled to asm.js and running in the browser at a steady framerate15.

This demo kicked off a whirlwind of discussion.

WebAssembly

By 2015, after Microsoft Edge announced asm.js support, all interested parties concluded that asm.js pointed in the right direction: a language like asm.js should be encoded as distributable bytecode. Google got on board with the effort, eventually dropping the NaCl/Pepper/PNaCl project, and WebAssembly was born. Chrome dropped support for NPAPI the same year and Firefox followed suit in 2017. The window Netscape opened, NPAPI, was finally shut.

At the end of the last post, we discussed how Ritchie and company extracted C’s abstract machine in the process of porting UNIX from the PDP-11 to the Interdata 8/32. They identified both where to compromise — to pull the useful commonalities out of both — and where to abstract. They picked their targets cannily. In the process, they performed a sort of magic trick.

WebAssembly pulled the same magic trick C did: it extracted an existing, useful abstract machine definition from several concrete implementations, like finding David in the block of marble. Rather than requiring that browser vendors implement a second virtual machine, WebAssembly support could be added incrementally, sharing code between the JS and Wasm runtimes. WebAssembly's machine definition supports C's abstract machine (C, C++, Golang, and Rust can all compile to this target), acting as a virtual instruction set architecture.

For its part, WebAssembly described a zero-capability system with no predefined system interface, making it an ideal sandbox. Riding along with the web platform meant a free ticket to just about every computer with a screen: from fridges to laptops to phones to embedded views within applications. It also meant taking on the security and isolation pressure of the web.

Like Java, WebAssembly was written for one purpose but well-adapted to serve others. Because Wasm describes a machine, not an implementation, it is not constrained to run only in browser JIT VMs. Wasm has been successfully used outside of the browser via runtimes like wasmtime and wasmer, and as a sandboxing intermediate representation for 3rd-party C code via wasm2c and RLBox36. (“Has a” vs. “Is a”: WebAssembly is not a “virtual machine” runtime; it has many independent virtual machine runtimes. The performance of browser Wasm runtimes may not be indicative of overall performance boundaries for the ISA.)
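Because Wasm specifies a machine rather than an implementation, even a few dozen lines of Java can act as a (very partial) runtime for it. This hypothetical sketch models four real Wasm instructions (`local.get`, `i32.const`, `i32.add`, `i32.mul`) as enum constants and evaluates them on an operand stack. It ignores the binary encoding, validation, control flow, and linear memory, so treat it as an illustration of the stack-machine model, not a conforming runtime.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.List;

// Hypothetical mini-interpreter for a tiny subset of Wasm's i32 instructions.
class TinyWasm {
    enum Op { LOCAL_GET, I32_CONST, I32_ADD, I32_MUL }

    static final class Instr {
        final Op op;
        final int imm; // local index for LOCAL_GET, constant for I32_CONST, unused otherwise
        Instr(Op op, int imm) { this.op = op; this.imm = imm; }
        Instr(Op op) { this(op, 0); }
    }

    // Run a function body with the given locals; the result is the value left on top of the stack.
    static int run(List<Instr> body, int... locals) {
        Deque<Integer> stack = new ArrayDeque<>();
        for (Instr instr : body) {
            switch (instr.op) {
                case LOCAL_GET: stack.push(locals[instr.imm]); break;
                case I32_CONST: stack.push(instr.imm); break;
                case I32_ADD: { int b = stack.pop(), a = stack.pop(); stack.push(a + b); break; } // wraps mod 2^32
                case I32_MUL: { int b = stack.pop(), a = stack.pop(); stack.push(a * b); break; } // wraps mod 2^32
            }
        }
        return stack.pop();
    }

    public static void main(String[] args) {
        // x * x + 1, i.e. the Wasm text: local.get 0  local.get 0  i32.mul  i32.const 1  i32.add
        List<Instr> body = List.of(
            new Instr(Op.LOCAL_GET, 0), new Instr(Op.LOCAL_GET, 0), new Instr(Op.I32_MUL),
            new Instr(Op.I32_CONST, 1), new Instr(Op.I32_ADD));
        System.out.println(run(body, 7)); // 50
    }
}
```

A browser engine, wasmtime, and a toy like this one would all agree on the answer because they implement the same specified machine; they differ only in how fast they get there.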

Through VM co-design, the V-ISA abstraction can be exploited to exceed native processor performance.

When you’re talking about virtualizing any component of a system, you’re doing so because you want to hide the specifics. There are a variety of reasons to hide the specifics — they might change, they might stand in the way of working through higher-level problems, or you might wish to hide the limitations of inexpensive components. In the case of Smalltalk, Java, and JavaScript, the specifics were hidden in order to present a unified view of the system to a programming language through a virtual machine.

Prior to asm.js, each of these languages presented a virtual machine to programs that was attuned to the needs of that host language. asm.js and WebAssembly discovered a virtual instruction set computer hiding in the optimizing virtual machine runtimes of JavaScript. A similar virtual instruction set computer could probably be found in the JVM or even the Strongtalk VM, but neither of those VMs had the advantage of riding along with the browser or being subject to the particular performance, isolation, and security requirements of the web platform.

Today, we’re about halfway into the fictional future that Gary Bernhardt predicted in his 2014 talk, “The Birth and Death of JavaScript”. In the talk, he describes using asm.js as the foundation of a virtual instruction set computer, one that removes the overhead of process isolation: the boundary between operating system and userland process can be dissolved, improving the performance of all programs.

Bernhardt calls out that there were two important qualities that led to this predicted outcome: JavaScript had to be bad and it had to be popular. To get to a viable instruction set computer, the host language platform had to be a bridge to an install base, not the destination itself. The host had to be popular, installed everywhere. It had to be easy for users to get programs to those installations. It had to be a viable host for a virtual ISA. At the same time, the host language had to be bad enough that no one would be tempted to reverse the relation between bridge and destination.

Java, Smalltalk, and even JavaScript all confused the bridge for the destination, while LLVA proposed a bridge without a preexisting destination.

As a web platform technology, we know WebAssembly has the install base and distribution network. We know no one wants to write WebAssembly by hand. But how does WebAssembly stack up to LLVA’s design goals for a virtual ISA?

- ✅ Simple, low-level operations. Yep, WebAssembly operates in terms of functions and mathematical operations on machine types and memory.

- ✅ No execution-oriented features. The stack is not visible from within the WebAssembly process runtime, no addressing modes are specified, compilers are free to generate whichever calling convention fits their needs.

- ✅ Portability across processor designs. WebAssembly runs anywhere all of the major browsers run: at a minimum, ARM and x86 processors are supported.

- ✅ High-level information to support optimization. Loops, branches, and function information are retained, allowing for function inlining, loop unrolling, loop-invariant code motion, and other optimizations.

- ✅ Language independence. Any language that targets the C abstract machine can target WebAssembly. Garbage collected languages are difficult to implement efficiently on top of Wasm at the moment, but better support is coming soon.

- ❓ Operating system support. Um. Uh.

You know what? We haven’t talked at all about WebAssembly’s operating system interface. And we’re not going to, at least not in this post.

If WASM+WASI existed in 2008, we wouldn’t have needed to created Docker. That’s how important it is. Webassembly on the server is the future of computing. A standardized system interface was the missing link. Let’s hope WASI is up to the task!

- Solomon Hykes via twitter

Why would one of the inventors of Docker say such a thing? What is WASI? What is a process runtime environment? What is an ABI? Let’s find out — next time!

(Thanks to Eric Sampson and C J Silverio for reviewing drafts of this blog post, and to Luke Wagner for providing additional context.)

Bibliography, Links, Reading list

So. This post was a doozy: there are at least a few drafts on the cutting room floor, including one brute-force attempt to walk straight up from 1945 to today. This is my first time writing anything resembling a history, let alone a history that’s within living memory for a lot of the people involved. In that spirit I tried to be as meticulous as I could about gathering and linking to references. Invariably I’ve lost a few over time. (And thanks in particular to Ron Gee for turning up “On Inheritance” and a few other papers from early-’90s Microsoft. Great sleuthing!)

All of these sources were instrumental in building my mental model for where WebAssembly fits into the story of computing (and, of course, the post above.) To be honest, I think this post was so difficult to write precisely because the source material is so interesting! There are a lot of themes that one could pluck out, and I encourage you to check out as much as you can of these.

- 1945. “As we may think”, Vannevar Bush.

- 1965. “Cramming more components onto integrated circuits”, Gordon E. Moore.

- 1968. “The Mother of All Demos”, Douglas Engelbart.

- 1968. “Alan Kay shows the Rand Tablet”, Alan Kay.

- 1972. “On the Criteria To Be Used in Decomposing Systems into Modules”, D.L. Parnas.

- 1977. “Teaching Smalltalk”, Adele Goldberg and Alan Kay. Covers the curriculum for teaching Smalltalk to 7th and 8th graders.

- 1978. “Actor Systems for Real-time Computation”, Henry Givens Baker, Jr.

- 1978. “Portability of C Programs and the UNIX System”, S. C. Johnson, D. M. Ritchie.

- 1980. “Mindstorms”, Seymour Papert.

- 1981. “Design of Smalltalk”, Daniel H. H. Ingalls.

- 1983. “A real-time garbage collector based on the lifetimes of objects”, Henry Lieberman, Carl Hewitt.

- 1983. “Smalltalk-80: Bits of History, Words of Advice”, Glenn Krasner.

- 1984. “Generation Scavenging: A non-disruptive high performance storage reclamation algorithm”, David Ungar.

- 1987. “Summary of Discussions from OOPSLA-87’s Methodologies & OOP Workshop”, Norman L. Kerth, John Hogg, Lynn Stein, Harry H. Porter, III.

- 1990. “On Inheritance: What it means and how to use it”, Tony Williams.

- 1991. “Optimizing Dynamically-Typed Object-Oriented Languages With Polymorphic Inline Caches”, Urs Hölzle, Craig Chambers, David Ungar.

- 1993. “Steve Jobs and the NeXT big thing”, Randall E. Stross.

- 1993. “The Early History of Smalltalk”, Alan Kay.

- 1993. (est). “A Brief History of the Web”, Tim Berners-Lee.

- 1995. “Microsoft Announces Tools to Enable a New Generation of Interactive Multimedia Applications for The Microsoft Network”, Microsoft.

- 1995. “The Internet Tidal Wave”, Bill Gates.

- 1996. “Apple Macintosh System 7.5.3”, Apple. (Try hypercard here!)

- 1996. “The Long, Strange Trip to Java”, Patrick Naughton.

- 2022. Hacker News comment on “The Long Strange Trip to Java”, Chuck McManis.

- 1996. “Will Java Kill Smalltalk?”, Jeff Sutherland.

- 1997. “DAISY: dynamic compilation for 100% architectural compatibility”, Kemal Ebcioğlu, Erik R. Altman.

- 1997. “The SK8 Multimedia Authoring Environment”, Apple.

- 1997. “Microsoft’s $8 Million Goodbye to Spyglass”, Peter Elstrom, Michael Mercurio.

- 1997. “Sun Sues Microsoft on Use of Java System”, John Markoff.

- 1998. “Barksdale takes on MS in trial”, Dan Goodin.

- 1998. “Achieving High Performance via Co-Designed Virtual Machines”, J. E. Smith, Tim Heil, Subramanya Sastry, Todd Bezenek.

- (It’s a PostScript file, so you might look into using Ghostscript to convert it. Use ps2pdf.)


- 1998. “Happy Third Birthday, Java”, Jon Byous.

- 1999. “What Netscape learned from cross-platform software development”, Michael A. Cusumano, David B. Yoffie.

- 2000. “Sun Microsystems, Inc. History”, Jay P. Pederson.

- 2000. “How the Web was Born: The Story of the World Wide Web, p213”, James Gillies, R. Cailliau.

- 2000. “The Xerox PARC Visit”, Alex Soojung-Kim Pang, Wendy Marinaccio.

- 2001. “Micro-Operation Cache: A Power Aware Frontend for Variable Instruction Length ISA”, Baruch Solomon, Ave Mendelson, Doron Orenstien, Yoav Almog, Ronny Ronen.

- 2001. “Modern Microprocessors: A 90-Minute Guide!”, Jason Robert Carey Patterson. (updated 2016.)

- 2002. “Sun, Microsoft settle Java suit”, Stephen Shankland.

- 2002. “Vision & Reality of Hypertext and Graphical User Interfaces, Ch 2.1.10”, Matthias Müller-Prove.

- 2003. “LLVA: A Low-level Virtual Instruction Set Architecture”, Vikram Adve, Chris Lattner, Michael Brukman, Anand Shukla, Brian Gaeke.

- 2004. (est) “What’s a Megaflop?”, Andy Hertzfeld.

- 2006. (est) “The History of the Strongtalk Project”.

- 2007. “A History of Erlang”, Joe Armstrong.

- 2007. “Mark Anders Remembers Blackbird”, Tim Anderson.

- 2007. “Panasonic Takes Java To Cable TV Set-Top Box”, Junko Yoshida.

- 2008. “Introducing SquirrelFish Extreme”, Maciej Stachowiak.

- 2008. “Dalvik VM Internals”, Dan Bornstein.

- 2009. “Native Client: A Sandbox for Portable, Untrusted x86 Native Code”, Bennet Yee, David Sehr, Gregory Dardyk, J. Bradley Chen, Robert Muth, Tavis Ormandy, Shiki Okasaka, Neha Narula, Nicholas Fullagar.

- 2009. “What Server Side JavaScript needs”, Kevin Dangoor.

- 2009. “JSConf ’09: node.js”, Ryan Dahl.

- 2009. “Palm announces webOS platform”, Nilay Patel.

- 2009. “Node.js”, OpenJS Foundation.

- 2009. “Google Chrome OS Previewed”, Serdar Yegulalp.

- 2010. “Thoughts on Flash”, Steve Jobs.

- 2010. “PICing on JavaScript for fun and profit”, Chris Leary.

- 2010. “Emscripten”, Alon Zakai.

- 2011. “Dart Language”, Google.

- 2011. “JS Linux”, Fabrice Bellard.

- 2012. “Learnable Programming”, Bret Victor.

- 2012. “Explaining JavaScript VMs in JavaScript - Inline Caches”, Vyacheslav Egorov.

- 2012. “LLJS: Low Level JavaScript”, James Long.

- 2012. “Texas Jury Strikes Down Patent Troll’s Claim to Own the Interactive Web”, Joe Mullin.

- 2012. “Tizen”, Samsung.

- 2013. “ARM vs RISC”, Chris Siebenmann.

- 2013. “Hidden Classes vs JSPerf”, Vyacheslav Egorov.

- 2013. “Youtube: Unreal Engine 3 in Firefox with asm.js”, Mozilla, Epic Games.

- 2013. asmjs.org, Alon Zakai. The original website for the asm.js project. The slides link is broken but I’ve included a link to a working version.

- 2013. “Asm.js: The JavaScript Compile Target”, John Resig.

- “On Asm.js”, Steven Wittens.

- “On ‘On Asm.js’”, Dave Herman.

- “Why Asm.js bothers me”, Vyacheslav Egorov.


- 2013. (est) “Electron.js”. (“Atom Shell”, at the time.)

- 2014. “Introducing Atom”, GitHub.

- 2014. “The Birth and Death of JavaScript”, Gary Bernhardt.

- 2014. “asm.js AOT compilation and startup performance”, Luke Wagner.

- 2015. “Atom Shell is now Electron”, Kevin Sawicki.

- 2015. “JerryScript”, Samsung.

- 2015. “What’s up with monomorphism?”, Vyacheslav Egorov.

- 2015. “Microsoft announces asm.js optimizations”, Luke Wagner.

- 2015. “WebAssembly”, Luke Wagner.

- “From Asm.js to WebAssembly”, Brendan Eich.

- 2015. “Smaller, Faster, Cheaper, Over: The Future of Computer Chips”, John Markoff.

- 2016. “JEP 295: Ahead-of-time Compilation”, Vladimir Kozlov, John Rose, Mikael Vidstedt.

- 2016. “Samsung’s Family Hub smart fridge is ridiculous, wonderful, and slow: The essence of Samsung”, Dieter Bohn.

- 2017. “The Xerox Alto, Smalltalk, and rewriting a running GUI”, Ken Shirriff.

- 2020. “JavaScript: The First Twenty Years”, Allen Wirfs-Brock, Brendan Eich.

- 2020. “Bits of History, Words of Advice”, Gilad Bracha.

- “The Rise and Fall of Commercial Smalltalk”, Allen Wirfs-Brock.

- 2020. Oldweb.today, Webrecorder.

- 2020. “‘C is how the computer works’ is a dangerous mindset for C Programmers”, Steve Klabnik.

- 2020. “understanding webassembly code generation throughput”, Andy Wingo.

- 2020. “Securing Firefox with WebAssembly”, Nathan Froyd.

- 2021. “WebAssembly and Back Again: Fine-Grained Sandboxing in Firefox 95”, Bobby Holley.

- “RLBox Overview”.

- 2021. “Modifiable Software Systems: Smalltalk and HyperCard”, Josh Justice.

- 2021. “The Secret Life of SIM Cards”, Karl Koscher, Eric Butler.

- 2022. “The influence of Self”, Patrick Dubroy.

- 2023. “structure and interpretation of flutter”, Andy Wingo.

- 2023. “C as Abstract Machine”, Chris Siebenmann.

- 2023. “Parsers | TLB Hit Podcast”, Chris Leary, JF Bastien.

- 2023. “Embrace the ‘Kinda’”, Dan Gohman.

- 2023. “What is WASI?”, Yoshua Wuyts.

- 2023. “a world to win: webassembly for the rest of us”, Andy Wingo.

If you’re still here, congratulations. Treat yourself to a 1987 interview with Bill Atkinson on “The Computer Chronicles”.

Footnotes

Today, a workstation may sport consumer hardware at a drastically upgraded scale (think “the mac pro vs the imac”, or “nvidia quadro vs the RTX line”.)

Now, if you’re working for IBM or Oracle, don’t fret: I haven’t forgotten you. Some of the architectures I talk about still exist, though mostly in the realm of high-performance compute!

Heck of a footnote, here: Mosaic was inspired by ViolaWWW, which was inspired by HyperCard, which was inspired by Smalltalk (and LSD), which was inspired by Engelbart’s NLS, whose creator was inspired by the 1945 article “As We May Think” by Vannevar Bush.

What’s more: ViolaWWW saved the web from a patent troll in 2012.

Project Green’s business plan was titled “Behind the Green Door”, a reference to an adult film from 1972. Ahem. You can read about this (and more) in Patrick Naughton’s essay, “The Long, Strange Trip to Java”.

This moonshot started when Patrick Naughton, frustrated with how the Sun NeWS project was being handled, was nearly recruited by Steve Jobs at NeXT Computers.

You can read Naughton’s account of the project here. You can also check out another contemporaneous account in this comment.

Java’s last resort was a bid for Time Warner’s WebTV business. According to Patrick Naughton, at the 11th hour, SGI bought their way in to the bid, undercutting Project Green.

Note: it did not prevent Microsoft from trying. They, in fact, shipped versions of Internet Explorer with support for <script type="text/vbscript">.

So. Double-check me on this one. By the time Firefox 1.0 was released, as far as I can tell from spelunking through the source code (in layout/html/base/src/nsObjectFrame.cpp and modules/plugin/base/src/nsPluginHostImpl.cpp), Java ran through the plugin system. In Netscape Communicator 3.0’s source code, however, lib/layout/layjava.c and lib/libjava/lj_embed.c seem to indicate that <applet> tags ran Java in-process, not through the plugin API. I know that Microsoft moved their MSJVM out of Internet Explorer and began requiring a separate plugin download in the early 2000s, but I’m not sure exactly when Java went from “browser built-in” to “browser plugin.”

And Microsoft wasn’t the only one! Java was so popular, in fact, that AT&T dropped Plan 9 in favor of their Inferno virtual machine operating system — patterned after Java.

“AT&T reveals plans for Java Competitor”, Jason Pontin, Infoworld Feb 96

Lest you think I am being unfair to the Microsoft of the ’90s:

Blackbird was a kind of Windows-specific internet, and was surfaced to some extent as the MSN client in Windows 95. Although the World Wide Web was already beginning to take off, Blackbird’s advocates within Microsoft considered that its superior layout capabilities would ensure its success versus HTTP-based web browsing. It was also a way to keep users hooked on Windows.

- Mark Anders, “Mark Anders remembers Blackbird”

Well, “their” browser. Internet Explorer was originally licensed from Spyglass, Inc, who had obtained a license to the source of NCSA Mosaic.

Microsoft was also cannily exploiting the terms of their contract with Spyglass: since they were releasing Internet Explorer for free, they didn’t technically need to pay any royalties on it. Spyglass sued Microsoft, who settled out of court for $8 million.

Thanks, initially, to the Dalvik virtual machine, authored by Dan Bornstein at Google: a clean-room virtual machine that ran Java programs after translating their bytecode into its own register-based “dex” format. This VM provided the application runtime environment for Android applications.

Oracle sued Google over this, famously.

Java fulfilled its destiny: it powered cable TV set-top boxes.

Java runs on SIM cards in your phone. No, really!

For more on AOT, see Luke Wagner’s excellent post.

Released in 2017, Java SE 9 included experimental ahead-of-time compilation support (JEP 295), built on the Graal compiler. In the same version, Java deprecated applets, later dropping browser support for them in Java SE 11 in 2018.

The title of this post is a play on a previous, seminal Smalltalk book, “Smalltalk-80: Bits of History, Words of Advice”.

Ahem:

By now the lab had acquired a SUN workstation with Smalltalk on it. But the Smalltalk was very slow—so slow that I used to take a coffee break while it was garbage collecting. To speed things up, in 1986 we ordered a Tektronix Smalltalk machine, but it had a long delivery time. […] One day I happened to show Roger Skagervall my algebra—his response was “but that’s a Prolog program.” I didn’t know what he meant, but he sat me down in front of his Prolog system and rapidly turned my little system of equations into a running Prolog program. I was amazed. This was, although I didn’t know it at the time, the first step towards Erlang.

- Joe Armstrong, “A History of Erlang”

In other words: Smalltalk’s VM was so slow that it inadvertently inspired the creation of Erlang. Cough.

In a memo Wikipedia claims as “foundational to the design of COM and OLE”, Tony Williams wrote:

Beau Shiel likens software constructed in traditional (i.e. structured) ways to dinosaurs, whose rigid skeletal structure resists adaptation. He compares exploratory programming systems (like Smalltalk-80 and Interlisp-D) to jellyfish, noting their fluidity and pointing out that even jellyfish have their ecological niche.

[…]

Unfortunately, while a jellyfish will assume the shape of its container, you can’t build a bridge with them.

Tony Williams, “On Inheritance: What it Means and How To Use it”, 1990

Universities formed a consortium for the purpose of procuring a 3M computer. Apple, IBM, and others were in the running. Steve Jobs looked at this market after being ejected from Apple and concluded that he could address that market with a small team, unencumbered by legacy technology. He founded NeXT. (See also “What’s a Megaflop?” and “Steve Jobs and the NeXT Big Thing”, pp 58, 74, 95, and 135.)

So in a way: if there were no Smalltalk, there would be no NeXT; nobody to snipe Patrick Naughton from Sun and hence, no Java.

Adele Goldberg struck on the idea of “design templates” to address this gap: providing Smalltalk students with paper templates to describe objects & their methods. A completed template held a table with three columns per row: the first column described a message the object may receive, the second held a plain English description of the action that would be carried out, and the third held Smalltalk code written to achieve the English description of the action. These could be handed out to students in varying states of completion (see page 22 of “Teaching Smalltalk”).

Bill Atkinson, the inventor of HyperCard, was a student in the Smalltalk classroom series. Alan Kay, of the Language Research Group at Xerox PARC & inventor of Smalltalk, would advise him on HyperCard.

HyperCard also included the HyperTalk programming language, which would serve as one of the other inspirations behind JavaScript.

And lest we forget, HyperTalk would also inspire SK8.

Gilad Bracha would go on to co-author the 2nd and 3rd editions of the Java Language Specifications. He was also a major contributor to the 2nd edition of the Java Virtual Machine specification and worked on the Dart language at Google.

Notably, David Ungar is the author of “Generational Scavenging”, a paper describing generational garbage collection. This technique is key in writing high-performance garbage collected languages and builds on work by H.G. Baker, S. Ballard, S. Shirron, and others.

I’m being unfair: at least a few people liked JavaScript, myself included. But even folks who liked JavaScript during its awkward ES3/5 years have to admit, it was a bit programmer-hostile.

And they got the famous cartoonist Scott McCloud, of “Understanding Comics” fame, to draw a comic for it! Ugh, so cool.

Tizen was Samsung’s HTML5-powered operating system, primarily intended for their TVs and projectors (though it appeared elsewhere). That’s right, JavaScript made its way to the potter’s field of language platforms: cable set-top boxes.

Samsung also released a new JavaScript runtime in 2015, JerryScript, intended for the internet of things.

Dart is still a going concern! Andy Wingo writes an excellent history of Dart here. Notably, Gilad Bracha and Lars Bak were involved in the development of the language!

JF Bastien highlights some interesting parsing work on the NaCl project on his podcast. A transcript is available here.

NaCl virtualized native ISAs, but PNaCl attempted to use LLVM IR (remember that from last post?) as a transfer format instead. A quote on this from Derek Schuff, an engineer at Google advising the Ethereum project on their next distributed VM:

I’m guessing you are unfamiliar with PNaCl. This is more or less the approach taken by PNaCl; i.e. use LLVM as the starting point for a wire format. It turns out that LLVM IR/bitcode by itself is neither portable nor stable enough to be used for this purpose, and it is designed for compiler optimizations, it has a huge surface area, much more than is needed for this purpose.

PNaCl solves these problems by defining a portable target triple (an architecture called “le32” used instead of e.g. i386 or arm), a subset of LLVM IR, and a stable frozen wire format based on LLVM’s bitcode. So this approach (while not as simple as “use LLVM-IR directly”) does work. However LLVM’s IR and bitcode formats were designed (respectively) for use as a compiler IR and for temporary file serialization for link-time optimization. They were not designed for the goals we have, in particular a small compressed distribution format and fast decoding. We think we can do much better for wasm, with the experience we’ve gained from PNaCl.

This is, in fact, a lot like what the Transmeta Crusoe did: accepting x86 instructions and using “code-morphing” to turn those into VLIW instructions.

OK, I say these optimizations are inherited from Smalltalk but many of them originated in LISP and were merely adopted by Smalltalk.

More specifically, Luke Wagner, Benjamin Bouvier, Dave Herman, Alon Zakai and the Spidermonkey team worked to ship asm.js.

I want to note that James Long was working on LLJS shortly before asm.js came out!